As technology and computing become increasingly embedded throughout the life sciences industry, more and more medical devices rely on software and network communications to deliver their intended use. Intuitive cloud interfaces and ever-larger datasets powering advanced algorithms can offer real benefits to users and patients—but any software, and any connected network, can introduce cybersecurity vulnerabilities that demand proper safeguards to prevent serious consequences.

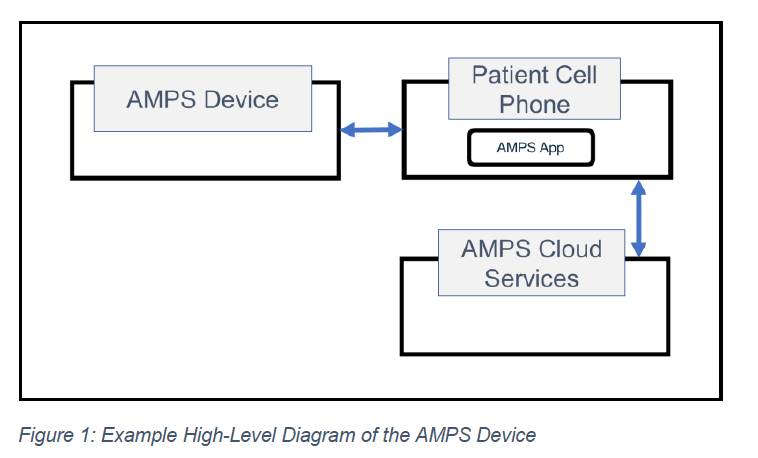

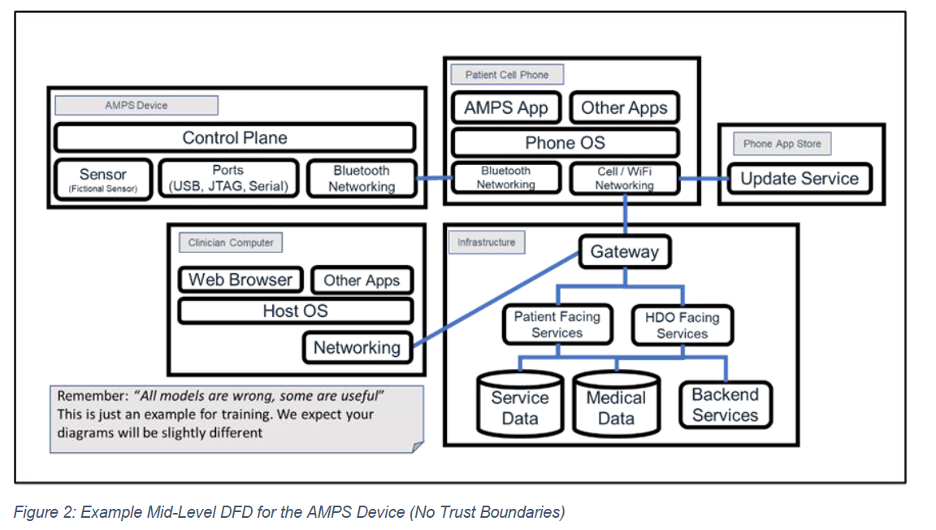

A common modern architecture pairs a device with a smartphone application via Bluetooth, with the application then communicating with a cloud database. This overview uses that pattern as the basis for a worked threat modeling example. The mock device referenced throughout this post is the AMPS (Ankle Monitor Predictor of Stroke) system.

What Is Threat Modeling, and Why Does It Matter?

Threat modeling is the practice of analyzing representations of a system to surface concerns about its security and privacy characteristics. Performing threat modeling helps you recognize what can go wrong in a system and pinpoint design or implementation issues that require mitigation, whether early in development or throughout the lifetime of the product. The output of the threat model, the threats themselves, then informs decisions during subsequent design, development, testing, and post-deployment phases.

The FDA has long recognized the value of threat modeling. The Agency sponsored the MITRE Corporation and the Medical Device Innovation Consortium (MDIC) to develop the Playbook for Threat Modeling Medical Devices, originally published in November 2021, which this post summarizes. Threat modeling has since become an explicit FDA expectation: the Agency’s June 2025 final guidance, Cybersecurity in Medical Devices: Quality System Considerations and Content of Premarket Submissions, calls for threat modeling as part of the security risk assessment for any “cyber device” under Section 524B of the Federal Food, Drug, and Cosmetic Act.

The Four Foundational Questions

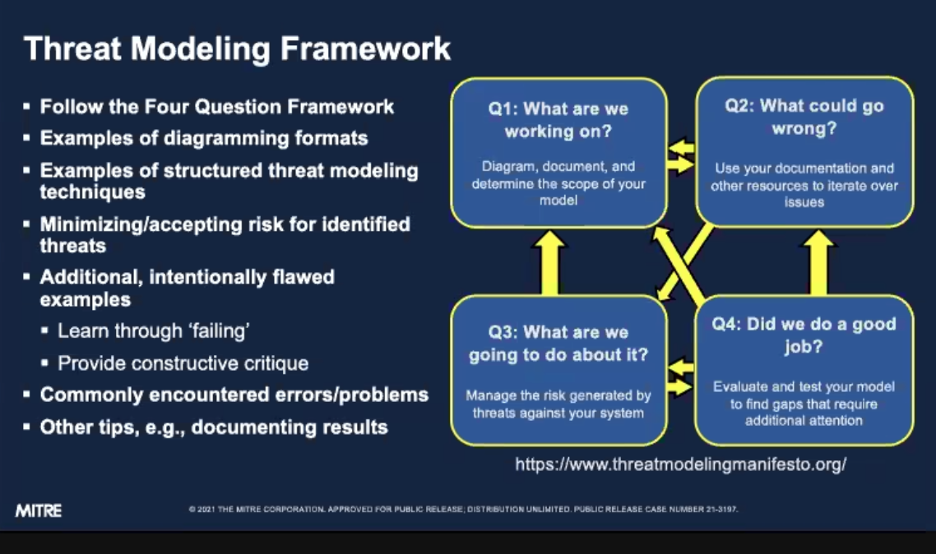

The Threat Modeling Framework is built on four questions, asked in order and revisited iteratively:

- What are we working on?

- What can go wrong?

- What are we going to do about it?

- Did we do a good job?

Answering one question often prompts a revision to a previous answer, so the framework is meant to be cyclical rather than linear.

Question 1: What Are We Working On?

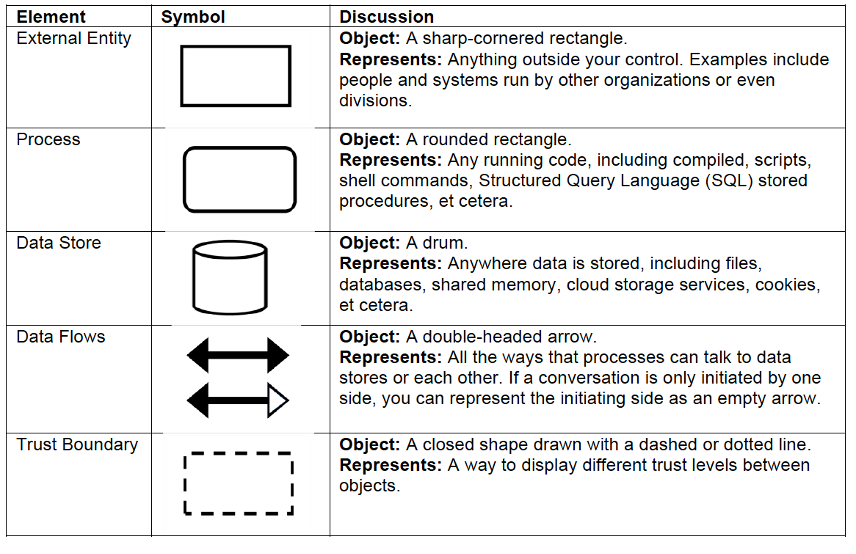

The most effective way to answer the first question is to build a Data Flow Diagram (DFD) that visualizes the system, its entities, and how they connect. A DFD typically uses five standard shapes (described in the Playbook and shown below) to represent processes, data stores, external entities, data flows, and trust boundaries.

The first step in establishing the DFD is identifying the device’s major components (Figure 1). Each component is then expanded with additional context describing its data flows in greater detail (Figure 2).

Mapping data flows between client, server, and database is particularly useful for showing how user credentials are exchanged, how authentication is validated, and where sensitive information moves. It is important to recognize that multiple modeling techniques can apply to a single device; the right approach is whichever combination best meets the manufacturer’s needs and provides the clearest understanding of the system. When mapping data flows, special attention should be paid to:

- Authentication protocols

- Programming and configuration commands

- Obtaining and validating software updates

- Sharing patient data with external servers

- Procedures to restore from backups

Before moving on, ask: Are all core functions of the system represented in the model? Could someone unfamiliar with the system understand how it works from the current documentation? Are critical data flows clearly labeled and described?
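A DFD can also be kept as data, which makes the questions above easier to check mechanically. The following is a minimal Python sketch, not a prescribed format from the Playbook: the element names, trust zones, channels, and the `crossing_flows` helper are all illustrative assumptions for the AMPS pattern. It lists the data flows that cross a trust boundary, which are usually the first ones to scrutinize.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    PROCESS = "process"
    DATA_STORE = "data store"
    EXTERNAL_ENTITY = "external entity"


@dataclass(frozen=True)
class Element:
    name: str
    kind: Kind
    zone: str  # trust zone the element sits in (hypothetical zone names)


@dataclass(frozen=True)
class Flow:
    source: str
    target: str
    data: str
    channel: str


# Illustrative AMPS elements; names and zones are assumptions for this sketch.
ELEMENTS = {
    e.name: e
    for e in [
        Element("Patient", Kind.EXTERNAL_ENTITY, "user"),
        Element("AMPS device", Kind.PROCESS, "device"),
        Element("Phone app", Kind.PROCESS, "phone"),
        Element("Cloud API", Kind.PROCESS, "cloud"),
        Element("Cloud database", Kind.DATA_STORE, "cloud"),
    ]
}

FLOWS = [
    Flow("Patient", "Phone app", "login credentials", "touchscreen"),
    Flow("AMPS device", "Phone app", "sensor readings", "Bluetooth"),
    Flow("Phone app", "Cloud API", "credentials, patient data", "HTTPS"),
    Flow("Cloud API", "Cloud database", "patient records", "internal network"),
]


def crossing_flows(elements, flows):
    """Return the flows whose endpoints sit in different trust zones."""
    return [f for f in flows if elements[f.source].zone != elements[f.target].zone]


for flow in crossing_flows(ELEMENTS, FLOWS):
    print(f"{flow.source} -> {flow.target} [{flow.channel}]: {flow.data}")
```

In this sketch a trust boundary is implied wherever two zones meet. A real model would likely need richer boundaries, such as nested zones or per-flow authentication details.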

Question 2: What Can Go Wrong?

Once the system is mapped, the next step is to identify threats—and to consider how existing controls might fail or be subverted. A solid starting point is the STRIDE model: Spoofing, Tampering, Repudiation, Information disclosure, Denial of service, and Elevation of privilege. STRIDE captures the major threat categories most relevant to medical devices. Table 1 describes each threat type and provides a representative example using the AMPS device.

Table 1. STRIDE threats with medical device examples.

| Threat | Description | Medical Device Example |
| --- | --- | --- |
| Spoofing | Impersonating another user, device, or service to gain unauthorized access. | An attacker uses stolen clinician credentials to log into the AMPS smartphone application and pull patient data. |
| Tampering | Modifying data or code without authorization, in transit or at rest. | A modified firmware package alters how the AMPS device interprets sensor data, producing false stroke-risk indicators. |
| Repudiation | A user or system performs an action without sufficient evidence to prove it occurred. | Stored patient records are altered and the system has no audit trail showing who made the change. |
| Information Disclosure | Exposing protected information to a party that should not have access. | Patient health data is transmitted between the AMPS device and the phone over an unencrypted Bluetooth channel. |
| Denial of Service | Degrading or interrupting the availability of a system or service. | Bluetooth jamming or a flood of malformed packets prevents the AMPS device from syncing with the cloud. |
| Elevation of Privilege | Gaining capabilities beyond what the user or process is authorized for. | A standard user exploits a software flaw to obtain administrator-level access to the cloud database. |

As threats are uncovered, they need to be tracked. A simple table that captures all identified threats—both within and outside the STRIDE framework—works well, provided it is updated and referenced as the team’s understanding develops.
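As one way to picture such a tracking table, here is a small Python sketch of a threat register that exports to CSV for a spreadsheet. The field names, the ID scheme, the `OTHER` category, and the third (privacy) entry are assumptions made for illustration. The first two entries paraphrase examples from Table 1.

```python
import csv
import io
from dataclasses import dataclass, asdict
from enum import Enum


class Stride(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information Disclosure"
    DENIAL_OF_SERVICE = "Denial of Service"
    ELEVATION_OF_PRIVILEGE = "Elevation of Privilege"
    OTHER = "Other"  # threats identified outside the STRIDE categories


@dataclass
class Threat:
    threat_id: str
    category: Stride
    element: str
    description: str
    status: str = "open"


# Hypothetical register entries for the AMPS example.
register = [
    Threat("T-001", Stride.SPOOFING, "Phone app",
           "Stolen clinician credentials used to log in"),
    Threat("T-002", Stride.INFORMATION_DISCLOSURE, "Bluetooth link",
           "Patient data sent over an unencrypted channel"),
    Threat("T-003", Stride.OTHER, "Phone app",
           "Sync timestamps let an observer infer a patient routine"),
]


def to_csv(threats):
    """Serialize the register to CSV text that a spreadsheet can open."""
    fields = ["threat_id", "category", "element", "description", "status"]
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=fields)
    writer.writeheader()
    for threat in threats:
        row = asdict(threat)
        row["category"] = threat.category.value  # store the readable label
        writer.writerow(row)
    return buf.getvalue()


print(to_csv(register))
```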

One challenge with STRIDE-style enumeration is that it can struggle to “tell a story.” It can also be difficult to prioritize findings, because some threats may already be mitigated by controls elsewhere in the system. Attack Trees address this by adding structure that makes it easier to walk through specific exploitation paths.

Attack Trees can be drawn either top-down or bottom-up:

- Top-down (threat-driven) Attack Trees model the attacker’s actions. The first stage of the attack is drawn as the root node at the top, with possible outcomes and attacker actions branching beneath it. The process is repeated at each subsequent level.

- Bottom-up (fault-analysis) Attack Trees also place the root node at the top, but that root represents an end state the system designers are trying to avoid. Boolean logic (AND/OR gates) is then used to map the preconditions required to reach that state. The diagram is built from the top down but is read from the bottom up, following the path an attacker would take.
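The AND/OR logic of a fault-analysis tree can be sketched in a few lines of Python. The tree below is a hypothetical example built around the tampering scenario from Table 1, and the leaf preconditions are assumptions for illustration, not findings about any real device. `reachable` reports whether the undesired end state at the root can be reached given a set of preconditions the attacker has achieved.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    label: str
    gate: str = "LEAF"  # "AND", "OR", or "LEAF"
    children: list = field(default_factory=list)


def reachable(node, achieved):
    """True if this node holds, given the set of achieved leaf labels."""
    if node.gate == "LEAF":
        return node.label in achieved
    results = [reachable(child, achieved) for child in node.children]
    # AND gates need every child; OR gates need at least one.
    return all(results) if node.gate == "AND" else any(results)


# Root = end state the designers want to avoid (hypothetical tree).
tree = Node("False stroke-risk indicator shown to patient", "OR", [
    Node("Install modified firmware", "AND", [
        Node("Obtain firmware image"),
        Node("Bypass update signature check"),
    ]),
    Node("Inject forged readings over Bluetooth", "AND", [
        Node("Be within radio range"),
        Node("Pair without authentication"),
    ]),
])

print(reachable(tree, {"Obtain firmware image"}))  # False
print(reachable(tree, {"Be within radio range",
                       "Pair without authentication"}))  # True
```

Reading the tree bottom-up, a control that reliably blocks one leaf under an AND gate closes that whole branch, which can help when prioritizing findings.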

A complementary framework is the Cyber Attack Lifecycle, which provides additional structure for both Attack Trees and STRIDE analysis. Its seven phases are:

- Recon – the adversary identifies, selects, and investigates a target.

- Weaponize – the adversary acquires or develops tooling around a vulnerability so it can be executed against the target.

- Deliver – the initial attack is transported to the target. Common vectors include phishing emails, network connections to vulnerable services, and physical media such as a USB drive left in a parking lot.

- Exploit – the initial attack is executed on the target.

- Control – the adversary establishes a mechanism to send commands to compromised software. This stage is common but not universal—a denial-of-service attack, for example, may not require post-delivery control.

- Execute – a catch-all category covering the steps the adversary takes to achieve their objectives.

- Maintain – the adversary preserves their access to the target.
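One possible use of the seven phases is to tag each control with the phase it is meant to disrupt and then list the phases with no control. The Python sketch below assumes a hypothetical set of AMPS controls and a hypothetical phase assignment for each. An uncovered phase is a prompt for discussion rather than proof of a gap, since not every attack passes through every phase.

```python
from enum import IntEnum


class Phase(IntEnum):
    RECON = 1
    WEAPONIZE = 2
    DELIVER = 3
    EXPLOIT = 4
    CONTROL = 5
    EXECUTE = 6
    MAINTAIN = 7


# Hypothetical controls, each tagged with the phase it aims to disrupt.
controls = {
    "Signed firmware updates": Phase.DELIVER,
    "Authenticated Bluetooth pairing": Phase.DELIVER,
    "Input validation in cloud API": Phase.EXPLOIT,
    "Audit logging with alerts": Phase.MAINTAIN,
}


def uncovered_phases(controls):
    """Return lifecycle phases, in order, that no control is tagged with."""
    covered = set(controls.values())
    return [phase for phase in Phase if phase not in covered]


print([phase.name for phase in uncovered_phases(controls)])
# ['RECON', 'WEAPONIZE', 'CONTROL', 'EXECUTE']
```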

A final consideration in this stage is documentation. How are threats identified, captured, and tracked? What is in scope and what is out of scope? What assumptions are being made? Documentation can take many forms—word processing documents, spreadsheets, diagrams, databases, or a ticketing system. Ticketing systems tend to be most useful for mature, released products rather than products still in development. Most organizations end up using a combination of these tools.

Question 3: What Are We Going to Do About It?

Once threats are mapped and documented, the next step is to address them. There are four primary strategies: eliminate, mitigate, accept, and transfer. The goal of threat modeling is to apply one or more of these strategies to each identified threat, document the rationale, validate the chosen approach, and re-evaluate threats when needed.

When that process breaks down, with threats missed or quietly “accepted,” the threats themselves do not disappear. They are silently accepted or transferred to other stakeholders: the manufacturer, customers, caregivers, or patients. Avoiding silent risk transference is one of the central goals of threat modeling. Some level of risk acceptance and transference is inevitable; the critical step is to document it and provide actionable information to all relevant stakeholders.

Eliminating threats is the most desirable outcome. If a threat does not exist, there is no risk associated with it. Manufacturers should document why the threat no longer exists and move on to addressing threats that can be mitigated.

Mitigating threats means identifying, adding, or improving controls that prevent or limit attacks. Mitigation may involve evaluating existing system features, adding new ones, or adjusting configurable settings. In many cases, an identified threat is already mitigated by controls in place. The key is to document both the threat and the corresponding control. This serves two purposes. First, future threat modeling activities benefit from a preserved knowledge base. Second, systems evolve over time, and what is mitigated today may not be mitigated after the next update.

Accepting risk is most straightforward when the risk falls only on the organization itself. Even then, a documented policy for approving and tracking accepted risks is essential. Accepting risk on behalf of customers is far more difficult and usually devolves into silent risk transference, which, as noted above, is what threat modeling exists to prevent. When a risk truly falls on a third party, treat it explicitly as a transfer rather than as an acceptance.

Transferring risk effectively requires documentation, approval processes, and careful attention to liability. Effective security guides, threat modeling outputs that users may need, and clear user interface warnings and cautions all play a role. Transfer also raises legal and contractual questions: What is the company’s responsibility to remediate new threats as they emerge? What is the notification policy when a vulnerability is identified? Who is liable if a system is compromised? These elements need to be addressed in writing.
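The four strategies, and the documentation each one calls for, can be expressed as a simple completeness check. This Python sketch is one reading of the section above rather than a required process. The `Disposition` fields, the rules in `problems`, and the sample entries (including the mitigations they mention) are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Strategy(Enum):
    ELIMINATE = "eliminate"
    MITIGATE = "mitigate"
    ACCEPT = "accept"
    TRANSFER = "transfer"


@dataclass
class Disposition:
    threat_id: str
    strategy: Optional[Strategy] = None
    rationale: str = ""
    approved_by: str = ""         # assumed required for accept and transfer
    stakeholder_notice: str = ""  # assumed required for transfer


def problems(d):
    """List documentation gaps for one threat disposition."""
    if d.strategy is None:
        return ["no strategy chosen (silent acceptance)"]
    issues = []
    if not d.rationale.strip():
        issues.append("missing rationale")
    if d.strategy in (Strategy.ACCEPT, Strategy.TRANSFER) and not d.approved_by:
        issues.append("missing approval")
    if d.strategy is Strategy.TRANSFER and not d.stakeholder_notice:
        issues.append("transfer not communicated to stakeholders")
    return issues


# Hypothetical dispositions for four tracked threats.
dispositions = [
    Disposition("T-001", Strategy.MITIGATE, "MFA enforced for clinician accounts"),
    Disposition("T-002", Strategy.ACCEPT, "Low impact, affects manufacturer only"),
    Disposition("T-003", Strategy.TRANSFER, "Phone OS patching is up to the user",
                approved_by="Security lead"),
    Disposition("T-004"),
]

for d in dispositions:
    print(d.threat_id, problems(d) or "ok")
```

A threat with no recorded strategy is flagged first, because that is the case the text describes as silent acceptance.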

Question 4: Did We Do a Good Job?

The final question is about effectiveness and completeness. The threat model should be living documentation—updated and refined throughout the product lifecycle. The core test is whether the documentation is complete, clear, and concise given what is known, and whether it meets applicable standards. Other useful checks include:

- Is each model element specific enough?

- Is there traceability between the components of the threat model?

- Are roles and responsibilities clearly assigned?

- Are assumptions and rationales captured in sufficient detail?
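The traceability check in particular lends itself to automation. As a rough Python sketch, assuming threats are keyed by ID and point at a DFD element, and controls list the threat IDs they address (all names and IDs here are hypothetical), `trace_gaps` reports broken or missing links.

```python
def trace_gaps(elements, threats, controls):
    """Report broken links between DFD elements, threats, and controls.

    elements: set of element names from the DFD
    threats:  dict mapping threat ID -> element name
    controls: dict mapping control ID -> list of threat IDs it addresses
    """
    gaps = []
    for threat_id, element in threats.items():
        if element not in elements:
            gaps.append(f"{threat_id}: unknown element '{element}'")
    linked = set()
    for control_id, threat_ids in controls.items():
        for threat_id in threat_ids:
            if threat_id in threats:
                linked.add(threat_id)
            else:
                gaps.append(f"{control_id}: unknown threat '{threat_id}'")
    for threat_id in threats:
        if threat_id not in linked:
            gaps.append(f"{threat_id}: no linked control")
    return gaps


# Hypothetical model fragments for the AMPS example.
elements = {"AMPS device", "Phone app", "Cloud database"}
threats = {"T-001": "Phone app", "T-002": "Bluetooth link", "T-003": "Cloud database"}
controls = {"C-001": ["T-001"], "C-002": ["T-009"]}

for gap in trace_gaps(elements, threats, controls):
    print(gap)
```

A threat with no linked control is not necessarily an error. It may have been eliminated, accepted, or transferred, in which case the documented rationale is what provides the trace.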

Although threat modeling can be done with whiteboards and paper, digital artifacts that can be stored in risk repositories add long-term value. Tools range from simple (PowerPoint diagrams, Excel spreadsheets) to dedicated platforms. Many organizations already use tools like Visio or Enterprise Architect to build the diagrams that answer “What are we working on?” Several open-source, commercial, and community tools also support diagramming, threat generation, methodology-based assessment, and mitigation suggestions. A few are listed in Table 2.

Table 2. Selected threat modeling tools.

| Tool | Methodology | Type | URL |
| --- | --- | --- | --- |
| Microsoft Threat Modeling Tool | STRIDE | Free | microsoft.com/en-us/securityengineering/sdl/threatmodeling |
| IriusRisk Threat Modeling Platform | Various | Commercial / community | iriusrisk.com/threat-modeling-platform |
| OWASP Threat Dragon | STRIDE, LINDDUN | Open source (desktop and web) | docs.threatdragon.org |

The Bottom Line

Threat modeling is no longer optional for connected medical devices. With the FDA’s June 2025 final cybersecurity guidance and the cyber device requirements under Section 524B of the FD&C Act, demonstrating a structured threat modeling approach is now part of what the Agency expects to see in premarket submissions. The four-question framework, anchored by Data Flow Diagrams, STRIDE, Attack Trees, and the Cyber Attack Lifecycle, gives manufacturers a defensible, integrated structure to do that work.

Whether you are early in development or preparing a 510(k), De Novo, or even a PMA submission, integrating threat modeling into your design controls and post-market processes is one of the highest-leverage investments you can make in both regulatory readiness and patient safety. If your team is building out this capability, Accurate Consultants can help you scope a threat model, align it with FDA guidance and requirements, and integrate it into your Cybersecurity Risk Management Plan and Report. Please reach out to us through the Contact Us section of the site.

References

- MITRE Corporation and Medical Device Innovation Consortium. Playbook for Threat Modeling Medical Devices. November 2021.

- U.S. Food and Drug Administration. Cybersecurity in Medical Devices: Quality System Considerations and Content of Premarket Submissions. Final Guidance, June 27, 2025.

- Federal Food, Drug, and Cosmetic Act, Section 524B (added by the Food and Drug Omnibus Reform Act, December 2022).